ExpandED Schools partners with schools, districts, and community-based organizations (CBOs) to help develop and support expanded learning time programs. We also work to continuously improve the learning experience for students. One way we do this is through data-driven decision making: analyzing evidence to inform programmatic choices.

Five years ago, we set out to pilot an expanded learning time model and launched five demonstration sites in New York City. (The demonstration also included six other schools in Baltimore and New Orleans.) At that size, a single member of our research team could regularly participate in on-site data meetings. Over the course of the demonstration and beyond, ExpandED Schools grew to nearly 50 sites, which made it impossible for a single research staff member to give each site the same level of attention. We needed a process that would let a small research team provide data-driven decision-making support to a large network of school-community partnerships. But how would that work?

Our answer: Create a new approach in which program managers, rather than research staff, would take a bigger role in understanding and communicating the implications of the organization's research and data. This new process, if successful, would reduce the need to hire more research staff and would teach more employees new skills to better serve their partners.

Building the Approach

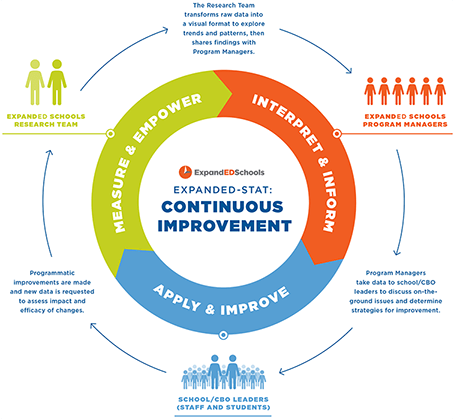

To achieve this goal, in the fall of 2013 ExpandED Schools launched ExpandED-Stat, a five-step continuous-improvement process designed to develop program managers' skills as data translators and coaches. The steps are:

- Measure

- Empower

- Interpret

- Inform

- Apply and Improve

Here's how it works.

Step 1: Measure

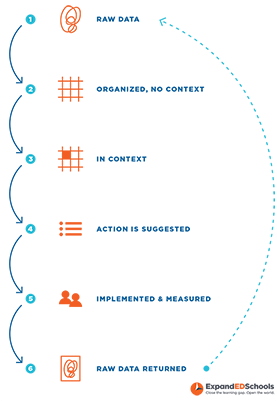

A cycle begins with student- or school-level data around long-term, success-predicting measures, such as attendance and enrollment, academic assessment, social-emotional learning, school climate, and others. The research team then transforms the raw data into a visual format that focuses on important findings within an individual school, making it more accessible to those without strong data backgrounds. The team can also explore trends and patterns in the data across the network of schools they work with, which can help facilitate group discussions.

Step 2: Empower

The research team then hosts a monthly meeting with the 10 or so program managers who oversee the ExpandED Schools network to help them better understand how to use the data. At this meeting, program managers view a sample school's data and receive coaching on how to read and share relevant findings with their school teams.

Step 3: Interpret

In addition to coaching on how to read the data, the research team uses the patterns and themes identified across our network of schools to facilitate discussion groups among program managers. Doing so allows program managers facing similar challenges to discuss strategies, as well as to hear from managers at sites not facing such challenges in order to glean best practices. "Hearing from my peers helped me to think about what other questions could come up at meetings, along with how to address certain conversations in a neutral and constructive manner," Program Manager Natalie Colon said.

Step 4: Inform

In the field, program managers take on the role of coach, holding meetings with their school teams to discuss the data and to continue brainstorming actions within the context of what schools and their partner community organizations experience day to day. As Program Manager Domingo Cruz shared, "Having the data packets and information helped to cement me as a stakeholder on the team in supporting quality." Program managers also solicit feedback from school and CBO leaders as to what additional data they would like to see analyzed to help them assess the impact of their programs.

Step 5: Apply and Improve

Connecting the data to the day-to-day recently played out for Jacques Noisette, a program manager working closely with six middle schools in Queens and Brooklyn. Attendance data showed dramatically lower attendance at one of his sites during the winter months. Yet in the breakout session with other program managers, it became clear that this trend wasn't happening at other schools, even in the same district. "I took this information to school leaders," Noisette said, "and in doing so, discovered that the bus stop was 10 blocks away from the school. Parents didn't want their children walking that distance in the dark at the end of the expanded school day."

The school used the attendance data to justify assigning a staff member to walk the students to the bus in the months when it got dark early. The next year, new data was collected and analyzed, and attendance records during winter showed an improvement.
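The comparison behind this example, one site's winter attendance set against the rest of the network, can be sketched in a few lines of Python. This is only an illustrative sketch, not the research team's actual method: the school names, attendance figures, the choice of winter months, and the 10-point cutoff are all hypothetical assumptions.

```python
from statistics import mean

# Hypothetical monthly attendance rates (fraction of enrolled students present).
# School names and numbers are invented for illustration only.
attendance = {
    "School A": {"Oct": 0.91, "Nov": 0.90, "Dec": 0.72, "Jan": 0.70, "Feb": 0.74},
    "School B": {"Oct": 0.92, "Nov": 0.91, "Dec": 0.90, "Jan": 0.89, "Feb": 0.90},
    "School C": {"Oct": 0.89, "Nov": 0.90, "Dec": 0.88, "Jan": 0.88, "Feb": 0.89},
}

# Assumed definition of "winter months" for this sketch.
WINTER = ("Dec", "Jan", "Feb")


def winter_drop(monthly):
    """Average non-winter rate minus average winter rate for one school."""
    winter = mean(monthly[m] for m in WINTER)
    other = mean(rate for m, rate in monthly.items() if m not in WINTER)
    return other - winter


def flag_outliers(data, threshold=0.10):
    """Return schools whose winter drop exceeds the (assumed) threshold."""
    return sorted(
        school for school, monthly in data.items()
        if winter_drop(monthly) > threshold
    )


print(flag_outliers(attendance))
```

A flag like this only tells a program manager where to ask questions. In the example above, the explanation (the distance to the bus stop) came from the conversation with school leaders, not from the numbers.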

Once a site has made improvements based on the data it has been given, the research team assesses the efficacy of such improvements and collects new sources of data, beginning the cycle again.

Since implementing ExpandED-Stat, we've experienced a number of positive changes including:

- The ability to implement data-driven decision making across all sites without significantly changing staff capacity,

- The ability to uncover more systemic patterns that aren't visible when focusing on individual schools,

- Program managers with increased confidence in using data and serving as active thought partners within the network, and

- Enhanced partnerships between program managers and schools and their community partners.

The ExpandED-Stat process has also had an effect beyond ExpandED Schools sites, allowing us to offer insights to schools across the country. For example, our data revealed a troubling decline in attendance during the month of June across many of our schools, a decline that also happens to be a nationwide trend. By examining the schools that did not show this drop, ExpandED Schools was able to develop a resource brief highlighting best practices that could be used by schools everywhere.

To other nonprofits that face an opportunity to scale but lack the resources to do so, we suggest considering the following questions to determine whether your organization has a hidden capacity to meet greater demand:

Do you have an opportunity to cross-train staff? Our program managers work with schools and community organizations in our network on a daily basis. While they have teaching or related experience in the education field, they do not have research or evaluation backgrounds. With data-driven decision making becoming ever more important, it made sense to develop them to take on this role within our organization.

Do you have staff members who can impart new skills? The research team needed to be able to deliver information to the program managers in a way that empowered them as leaders and not just messengers. It is important to recognize the existing capacities of your staff and ensure that the training you provide is appropriate to their current skill set, while recognizing that this skill set can develop over time.

Can a work process be refined or created? While the process clearly empowered the program managers, it also had to work for our research staff as a tool for continuous improvement. Building an approach that was sustainable for the research team was imperative.

Is your organization willing to be collaborative and flexible? Scaling an initiative takes a fair amount of change management. It is important that all parties recognize the value of the process. Understanding where there may be sticking points and being willing to pivot when something doesn't work can determine whether your efforts will be a success.

Katie Brohawn, PhD, is director of research at ExpandED Schools, where she helps establish research and evaluation priorities in order to raise service quality, inform policy, and support the organization in making data- and research-driven continuous improvements. She writes the organization's NeuroConnections blog series, which focuses on how research in biology and neuroscience can be applied to the expanded learning space.